We have to get real about when human skills are better than AI… and whether anybody cares.

I’m reluctant to loan out nonfiction books because I fill my books with marginalia arguments with authors. I worry that if people read these marked-up books they’ll think I’ve slipped a cog. The same worry overtakes me when I read the news at a coffee shop: if I’m not careful, folks will hear me mutter, “bullshit… bullshit… bullshit.” Luckily, I wasn’t at Peet’s when I read “Higher Education Plans for a Future Markedly Changed by A.I.” (NYT $, Dec 10), part of the coverage from the DealBook Summit.

Seven college and university presidents discussed how AI is transforming higher education. Along the way, the speakers shared a bunch of nonsense. The lies we tell ourselves about AI aren’t confined to higher education, but their density here was striking.

Here are a few examples:

Human skills vs. AI skills

Task force panelists agreed that in the new world of generative artificial intelligence, there is a greater need than ever to prepare students for an ever-changing workplace environment. “It’s really about, can we help you analyze complexity and be able to transform that complex thinking to all areas,” which will serve you throughout your life, said Carmen Twillie Ambar, president of Oberlin College.

True. But does an undergraduate education equip students to do this? Complexity comes from things that don’t fit neatly into conceptual boxes, and college degrees are about learning the insides of a particular disciplinary box—the major.

I’m also skeptical that many professors are capable of preparing students in this way. Non-tenured and adjunct professors are overwhelmed and scared that they’ll lose their jobs. Tenured professors have no incentive to change if they are happy doing what they’ve always done. Plus, many professors have no recent, direct experience of a non-academic workplace, so how can they “prepare students for an ever-changing workplace environment”?

The head-to-head fallacy

Daniel Diermeier, chancellor of Vanderbilt University, added: “It’s not so much the case, I think, that we’re going to have a radiologist that’s going to be replaced by an algorithm, but it’s going to be the radiologist that knows A.I. is going to replace the one that doesn’t.”

This is an infuriating new cliché. Head to head, sure, the radiologist who understands AI will be more successful than the radiologist who doesn’t, but from an institutional point of view, if one human radiologist plus AI equals five human radiologists without AI, that’s still four humans with expensive medical educations who won’t find work.

Communication, empathy, relationships

Stanford president Jonathan Levin said the goal of a college degree…

is to graduate students well equipped to use these [AI] tools as well as teach them “to be good at the human things that don’t involve technology, that involve communication and empathy and human relationships.”

The problems?

Communication: LLMs get better at communication with each passing update. Holding AIs to a standard of perfect communication is misguided. It’s like holding self-driving cars to a standard of perfect safety. All that we need with self-driving cars is that they are a lot safer than human-driven cars: they aren’t yet, but they will be. Likewise, all that we need from AI communication is that it’s no more mistake-prone than human communication.

The old joke about the two guys fleeing a bear comes to mind. One guy puts on his running shoes. “You’ll never outrun the bear,” the other guy says. The first guy replies, “I only have to outrun you.”

Empathy: what is the economic value of human empathy compared to machine-emulated empathy? Is that value enough to make a corporation that only cares about shareholder wealth pay that premium? Probably not. A human call center employee might be better at customer service than a chatbot, but the chatbot is cheaper and doesn’t ask for breaks or health insurance.

Also, in his 2016 book Against Empathy: The Case for Rational Compassion, Yale psychology professor Paul Bloom argued:

Empathy is biased, pushing us in the direction of parochialism and racism. It is shortsighted, motivating actions that might make things better in the short term but lead to tragic results in the future. It is innumerate, favoring the one over the many.

Empathy, in other words, might be the wrong example of a human trait being superior to an AI driven by logic.

Human relationships: I agree that human-to-human relationships are better than human-to-AI relationships. Likewise, a home-cooked meal is healthier and (if you’re a decent cook) tastier than McDonald’s.

But people still go to McDonald’s.

We humans are talented at imagining relationships where there are none, and AIs are talented at pretending. In previous Dispatches, I’ve shared many examples, and articles abound about humans having intense, sometimes romantic, sometimes deadly, and always invisibly one-sided relationships with AIs.

We are also masters of anthropomorphism—seeing human-like characteristics in things that aren’t human. This isn’t just AIs. People have seen a man in the Moon since there have been people.

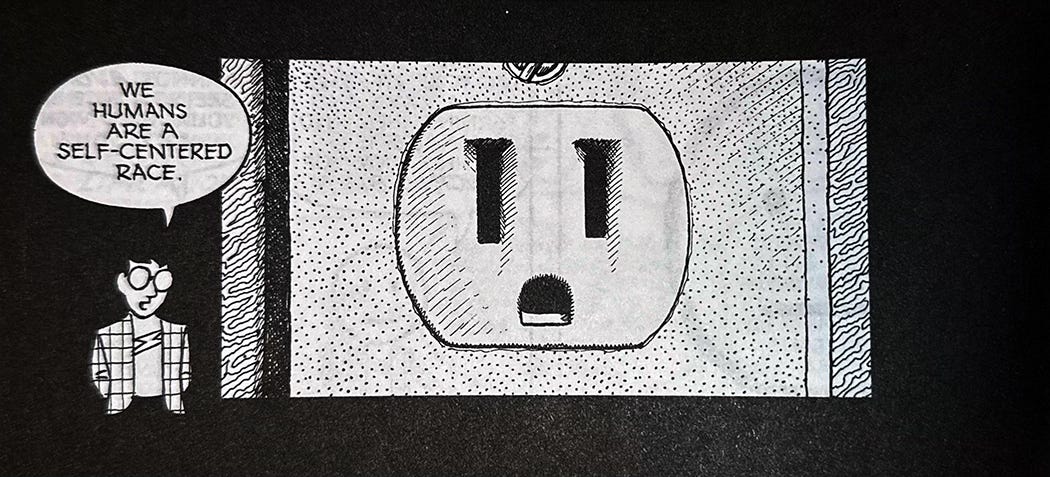

In his magnificent 1993 book Understanding Comics, Scott McCloud teaches a master class in this kind of projection. Here’s one example where we see a human face in an electrical socket:

Final thoughts

It’s ominous when seven brilliant higher education leaders on a public stage offer platitudes about how AI is transforming education and human life in general.

We have to stop presuming that humans are better at things than AIs just because we happen to be human. We also have to stop presuming that humans will prefer other humans to AIs.

AIs exist to make things easy for humans. Unlike pesky other people, AIs have no needs or desires of their own.

Easy tends to win; hard tends to lose.

As the old saw goes, hope is not a strategy.


* Image Prompt: “Please create a photorealistic image of a middle-aged white man looking in a mirror. His reflection is disheveled. He is unshaven. He has bags under his eyes. His tee shirt is rumpled. His hair is uncombed and greasy. Underneath the picture, put the caption, ‘I look great today’ in black letters on a white background.” I then edited the first result with “Remove the flannel collar from the man on the left. Make the hair on the man on the right more messy” to get the image above.
